What does fine mean?

Fine, a term with roots in French, can be translated as "well" or "good". Its meaning goes much further, though. Fine can be an adjective describing something of superior quality or a noun referring to a monetary penalty. Whether in refined cuisine or when receiving a penalty, fine has many contexts and nuances, adding a special touch…

What does report mean?

The term 'report' puzzles many people, but its definition is simple: to recount, to inform, to convey a detailed description of something or someone. Yet this seemingly simple act can reveal powerful stories and play a fundamental role in society.

What does motivation mean?

Motivation is the inner flame that can move mountains, drive dreams, and transform reality. It is the force that pushes us out of our comfort zone to pursue success relentlessly. But what does motivation actually mean? It is fuel for the soul, the desire that drives us to act, the gleam in our eyes when facing a challenge. It is finding…

Medicine

What does generalized anxiety disorder mean?

Generalized anxiety disorder is a condition that affects millions of…

Must read

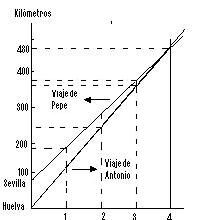

What are polynomial equations?

Have you ever wondered about the meaning of polynomial equations and how…

What does dividend mean?

Have you ever wondered what dividend means? It is a little word…

What does association mean in mathematics?

Have you ever wondered what association means in mathematics? Far beyond…

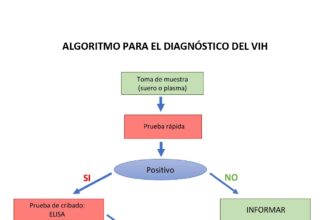

What does algorithm mean in mathematics?

An algorithm in mathematics is like an incredible numerical choreography, a dance between…

What are mathematical properties?

Have you ever wondered what mathematical properties are? In this article, we will explore…

What does calculus mean?

Calculus, a term that provokes curiosity and perhaps even a certain fear…

What does numerical expression mean?

The numerical expression, a mathematical puzzle wrapped in symbols, numbers, and operations.…

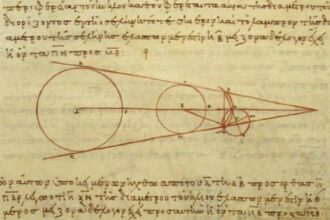

What are trigonometric relations?

Have you ever wondered what trigonometric relations are? These mathematical concepts,…

What does capitalism mean?

What does capitalism mean? The concept opens onto a wide range of interpretations and debates. From its origins in the Industrial Revolution to the present day, capitalism has rested on the pillars of private property, free enterprise, and competitive markets. But has this economic system proven efficient and sustainable for everyone? This article explores these questions and more, looking closely at the complex system that shapes contemporary society.

What does the acronym LGBTQIA+ mean?

The acronym LGBTQIA+ represents the diversity of gender identities and sexual orientations present in society. Each letter embraces a unique expression, building a community united in the pursuit of equality…
